The guidelines for screening women for breast cancer are inconsistent. The American Cancer Society recommends annual mammograms for women older than 45 years with average risk, but other groups, such as the U.S. Preventive Services Task Force (USPSTF), recommend less aggressive screening.

This controversy centers on mammography’s frequent false-positive detections (false alarms), which lead to unnecessary stress, additional imaging exams and biopsies. The USPSTF argues that the harms of early and frequent mammography outweigh the benefits.

However, a recent Stanford study suggests a better way to reduce these false alarms without increasing the number of missed cancers. Using over 112,000 mammography cases collected from 13 radiologists across two teaching hospitals, the researchers developed and tested a machine-learning model that could help radiologists improve their mammography practice.

Each mammography case included the radiologist’s observations and diagnostic classification from the mammogram, the patient’s risk factors and the “ground truth” of whether or not the patient had breast cancer, as determined by follow-up procedures. The researchers used the data to train and evaluate their computer model.

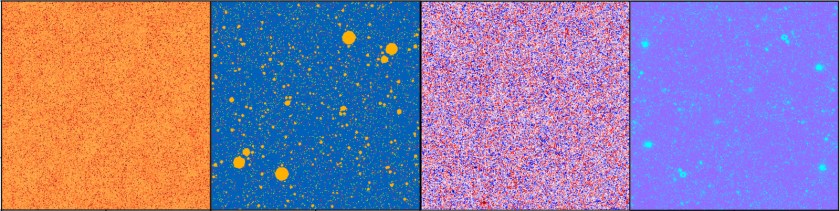

The researchers compared the radiologists’ performance with that of the machine-learning model, doing a separate analysis for each of the 13 radiologists. They found significant variability among radiologists.

Based on accepted clinical guidelines, radiologists should recommend follow-up imaging or a biopsy when a mammographic finding has a two percent probability of being malignant. However, the Stanford study found that the participating radiologists used thresholds that varied from 0.6 to 3.0 percent. In the future, similar quantitative observations could be used to identify sources of variability and to improve radiologist training, the paper said.
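To make the threshold idea concrete, here is a minimal Python sketch of the decision rule. It assumes that a probability of malignancy has already been estimated for a finding, and that follow-up is recommended when that estimate is at or above the threshold. The function name `recommend_follow_up` and the one percent example finding are hypothetical and are not taken from the study; only the two percent guideline and the 0.6 to 3.0 percent range come from the text above.

```python
# Hypothetical sketch of a threshold-based follow-up rule.
# Assumption: "at or above the threshold" triggers follow-up.

GUIDELINE_THRESHOLD = 0.02   # two percent, per the clinical guideline
LOWEST_OBSERVED = 0.006      # 0.6 percent, lowest threshold seen in the study
HIGHEST_OBSERVED = 0.03      # 3.0 percent, highest threshold seen in the study


def recommend_follow_up(p_malignant: float,
                        threshold: float = GUIDELINE_THRESHOLD) -> bool:
    """Return True if a finding should get follow-up imaging or a biopsy."""
    if not 0.0 <= p_malignant <= 1.0:
        raise ValueError("p_malignant must be a probability between 0 and 1")
    return p_malignant >= threshold


# Illustrative finding with a made-up one percent probability of malignancy.
example_finding = 0.01

for label, threshold in [
    ("lowest observed threshold", LOWEST_OBSERVED),
    ("guideline threshold", GUIDELINE_THRESHOLD),
    ("highest observed threshold", HIGHEST_OBSERVED),
]:
    decision = recommend_follow_up(example_finding, threshold)
    print(f"{label} ({threshold:.1%}): follow-up recommended = {decision}")
```

Under these assumptions, the same finding is flagged by a radiologist working at the lowest observed threshold but not by one working at the guideline or the highest observed threshold, which is the kind of variability the study measured.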

The study included 1,214 malignant cases, which represent 1.1 percent of the total number of cases. Overall, the radiologists reported 176 false negatives, meaning cancers that were missed at the time of the mammograms. They also reported 12,476 false positives, or false alarms. In comparison, the machine-learning model missed one additional cancer, but it decreased the number of false alarms by 3,612 cases relative to the radiologists’ assessment.
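These counts translate directly into rates. The short Python sketch below does the arithmetic using only the numbers reported in this post; the variable names are illustrative, and the derived percentages are simple calculations rather than figures quoted from the paper.

```python
# Counts reported in the article.
MALIGNANT_CASES = 1_214
RADIOLOGIST_FALSE_NEGATIVES = 176
RADIOLOGIST_FALSE_POSITIVES = 12_476
MODEL_EXTRA_FALSE_NEGATIVES = 1        # model missed one additional cancer
MODEL_FALSE_POSITIVE_REDUCTION = 3_612  # fewer false alarms than radiologists


def sensitivity(malignant: int, false_negatives: int) -> float:
    """Fraction of malignant cases that were correctly flagged."""
    return (malignant - false_negatives) / malignant


# Derived counts for the model.
model_false_negatives = RADIOLOGIST_FALSE_NEGATIVES + MODEL_EXTRA_FALSE_NEGATIVES
model_false_positives = RADIOLOGIST_FALSE_POSITIVES - MODEL_FALSE_POSITIVE_REDUCTION

# Derived rates.
radiologist_sensitivity = sensitivity(MALIGNANT_CASES, RADIOLOGIST_FALSE_NEGATIVES)
model_sensitivity = sensitivity(MALIGNANT_CASES, model_false_negatives)
false_alarm_reduction = MODEL_FALSE_POSITIVE_REDUCTION / RADIOLOGIST_FALSE_POSITIVES

print(f"Radiologist sensitivity: {radiologist_sensitivity:.1%}")
print(f"Model sensitivity:       {model_sensitivity:.1%}")
print(f"Model false positives:   {model_false_positives:,}")
print(f"False-alarm reduction:   {false_alarm_reduction:.1%}")
```

With these inputs, sensitivity works out to about 85.5 percent for the radiologists and about 85.4 percent for the model, while false alarms fall from 12,476 to 8,864, a drop of roughly 29 percent.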

The study concluded: “Our results show that we can significantly reduce screening mammography false positives with a minimal increase in false negatives.”

However, their computer model was developed using data from 1999 to 2010, the era of analog film mammography. In future work, the researchers plan to update the computer algorithm to use the newer descriptors and classifications for digital mammography and three-dimensional breast tomosynthesis.

Ross Shachter, PhD, a Stanford associate professor of management science and engineering and lead author on the paper, summarized in a recent Stanford Engineering news release, “Our approach demonstrates the potential to help all radiologists, even experts, perform better.”

This is a reposting of my Scope blog story, courtesy of Stanford School of Medicine.