Almost every day, news outlets report on highly infectious COVID-19 variants threatening to sneak past the front-line antibody defenses developed by our bodies after vaccination or previous infection. That’s because the coronavirus responsible for COVID-19, SARS-CoV-2, is doing what most viruses do: evolving under natural selection to become more resistant to vaccines and antiviral drugs.

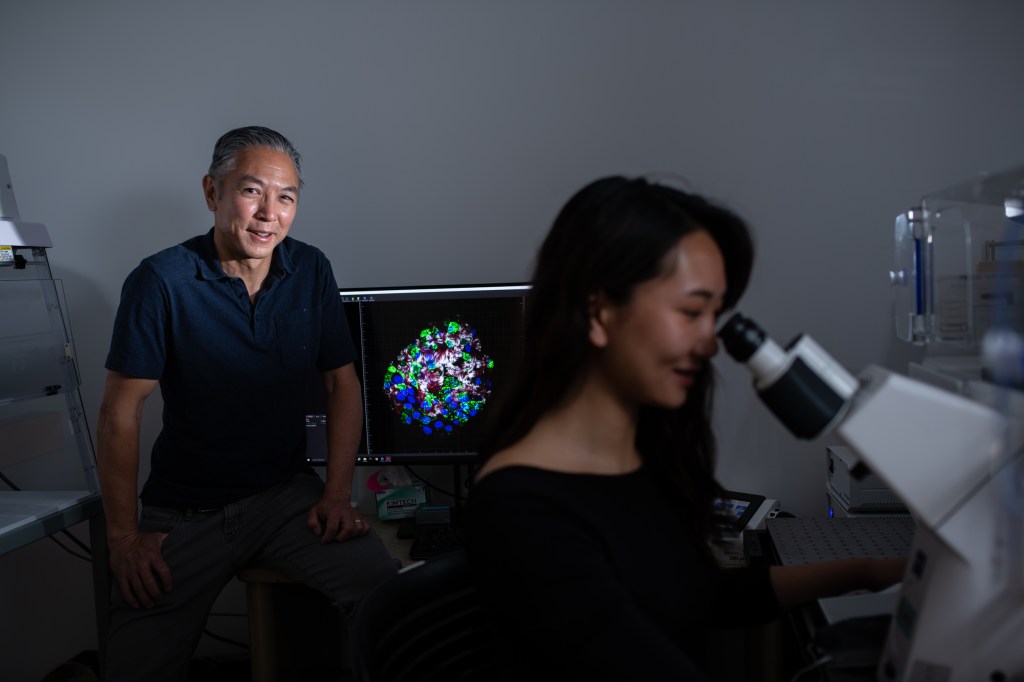

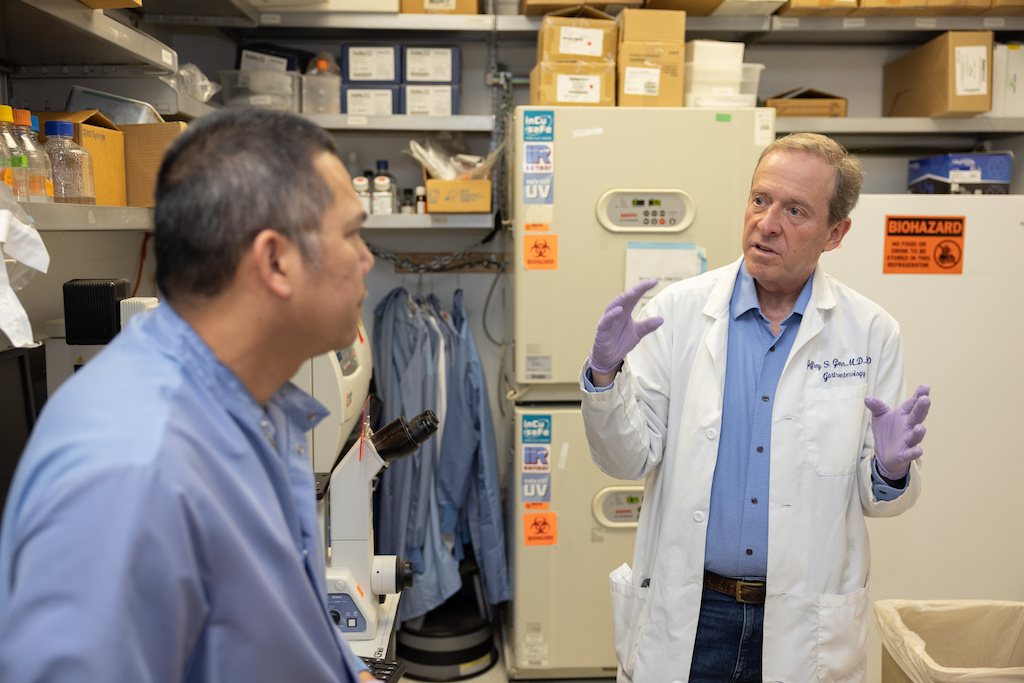

This isn’t surprising to Stanford researcher Jeffrey Glenn, MD, PhD, professor of medicine and of microbiology and immunology, who has spent years developing novel antiviral therapies for hepatitis, influenza, and enteroviruses. Fortunately, he and his international collaborators quickly pivoted and applied their expertise to COVID-19 too.

“When all of Stanford was shut down, we were considered essential. In fact, we’d never been busier. We worked 24/7 in shifts, wearing masks and social distancing,” describes Glenn, the Joseph D. Grant Professor. “This is what we’ve trained our whole lives to do — help develop drugs that could counter this and future pandemics. It’s an honor and privilege to do this work.”

Glenn’s research focuses on two approaches for creating antivirals for various diseases. The first strategy targets factors in the host that the virus depends on. The second one targets the structure of the virus itself.

Targeting Factors in the Host to Treat Hepatitis

Although viruses mutate quickly, they rely on their hosts’ cells to reproduce. So, researchers are developing host-targeting drugs. These are novel antivirals that interfere with host factors essential for the life cycle of the virus or that boost the host’s innate immunity. For example, some antivirals target specific proteins in the host to prevent the virus from replicating its genome inside the host’s cells.

Host-targeting drugs have several advantages. They act on something in the host that isn’t under the genetic control of the virus, Glenn explains, so it’s much harder for the virus to mutate, escape the drug, and still be viable.

“Another advantage is in the biology,” he says. “If one virus has evolved to depend on a particular host factor, many other viruses may have too. So, you can create a broad-spectrum antiviral therapy: one drug for multiple bugs.”

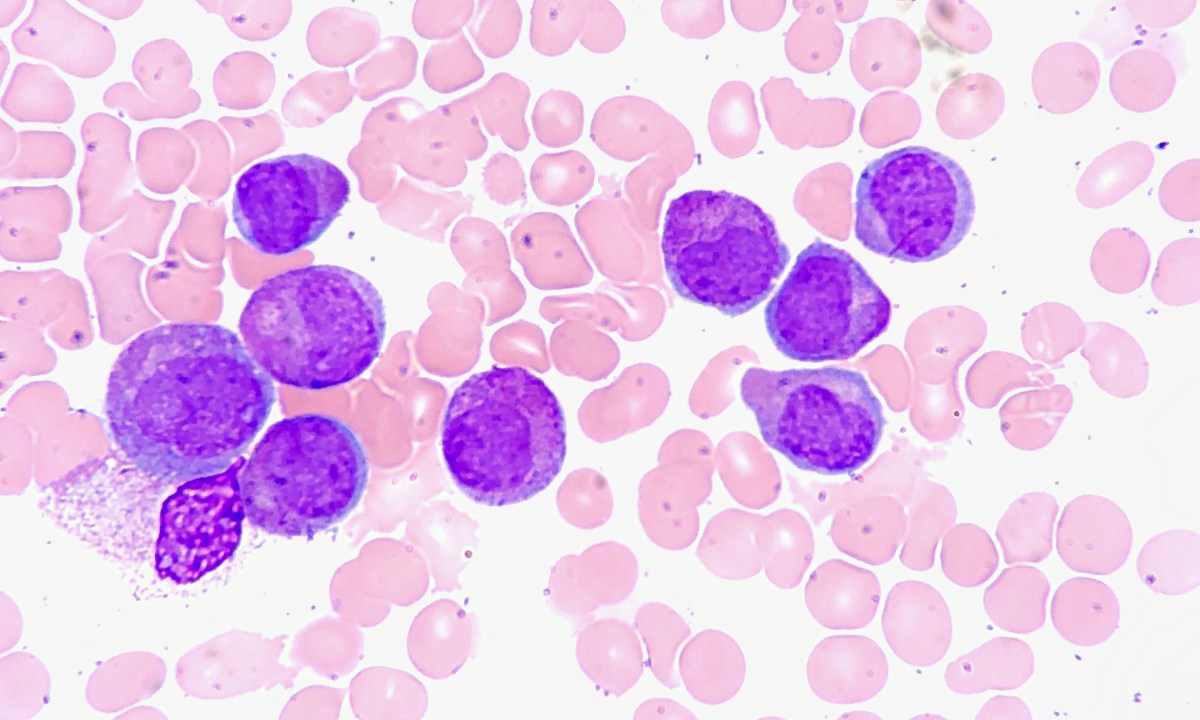

Glenn’s team pursued this strategy for hepatitis delta, the most severe form of viral hepatitis.

First they discovered a specific process occurring inside a host’s liver cells that the virus depends on. Then they performed animal studies and human clinical trials to test the safety and effectiveness of treating hepatitis delta with lonafarnib, a drug originally designed to treat various cancers. They demonstrated that lonafarnib inhibits the identified host-cell process and prevents the virus from replicating.

“Our phase 2 trial showed no evidence of drug resistance — one of the first examples in humans to validate this advantage of a host-targeting drug,” Glenn says. “A company that I founded, Eiger Biopharmaceuticals, is completing by year’s end a phase 3 trial. Hopefully, lonafarnib will become the first oral drug approved by the U.S. Food and Drug Administration (FDA) for hepatitis delta based on that data.”

Glenn takes this success to heart, as evidenced by a photograph on his cell phone of three young Turkish men standing together in Ankara, where the study was conducted. “They are the first three patients in history to have their hepatitis delta virus become undetectable from lonafarnib,” he says. “There is nothing cooler for a physician-scientist than seeing something you’ve made actually make a difference in patients’ lives.”

Lonafarnib also demonstrates the potential advantage of using host-targeting drugs for nonviral applications. The FDA has approved the drug to treat Hutchinson-Gilford progeria syndrome, a rare genetic condition that causes children to prematurely age and die, and lonafarnib was shown to prolong their lives.

Pivoting to Treat COVID-19

Glenn and his collaborators have developed other host-targeting drugs — including peginterferon lambda, which was originally designed to treat hepatitis delta by boosting a host’s immune system.

When the pandemic hit, they realized peginterferon lambda might be the perfect drug to treat COVID-19, because it is a broad-spectrum antiviral that targets the body’s first line of defense against viruses. Importantly, it had already been safely given to more than 3,000 patients in 20 different clinical trials, mostly treating chronic hepatitis, for which it is administered weekly for up to a year, Glenn says.

Since Eiger Biopharmaceuticals wasn’t funded for COVID-19 studies, it made peginterferon lambda available at no cost to outside researchers. Glenn’s colleagues responded with tremendous interest within minutes of getting his email offer. Stanford was the first site to finish a phase 2, randomized, placebo-controlled clinical trial, but other studies soon followed, including one in Toronto.

These phase 2 trials treated COVID-19 outpatients. Collectively, they showed a single dose of peginterferon lambda was well tolerated and significantly reduced the amount of SARS-CoV-2 virus in the nasal passages — particularly for patients who initially had a high level of detectable virus, Glenn explains.

Next, his colleagues in Brazil performed a large, randomized, placebo-controlled outcomes study to evaluate the effectiveness of peginterferon lambda. This study, the TOGETHER Trial, used an adaptive trial design that analyzes data as they emerge rather than waiting until the end of the study, saving valuable time and money. The study ran from June 2021 to February 2022.

Even though most of the more than 1,900 enrolled patients were vaccinated, a single dose of peginterferon lambda reduced the number of COVID-19-related hospitalizations by 51% and deaths by 61%, as reported in a Grand Rounds presentation. For unvaccinated patients treated early, there was an 89% reduction in COVID-19 hospitalizations or death. And it worked across all variants, including omicron.
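For readers curious how percentage reductions like these are typically calculated, here is a minimal sketch of a relative risk reduction, which compares the event rate in a treated group with the rate in a placebo group. The function name and the counts are hypothetical and chosen only to make the arithmetic easy to follow. They are not data from the TOGETHER Trial, and the trial's own statistical analysis may have used a different method.

```python
def relative_reduction(events_treated, n_treated, events_control, n_control):
    """Return the relative risk reduction (as a fraction) for treated vs. control."""
    if n_treated <= 0 or n_control <= 0:
        raise ValueError("group sizes must be positive")
    if events_control == 0:
        raise ValueError("control group needs at least one event")
    risk_treated = events_treated / n_treated    # event rate with the drug
    risk_control = events_control / n_control    # event rate with placebo
    return 1 - risk_treated / risk_control


# Hypothetical counts for illustration only; NOT the trial's actual numbers.
rrr = relative_reduction(events_treated=20, n_treated=1000,
                         events_control=40, n_control=1000)
print(f"Relative reduction: {rrr:.0%}")  # prints "Relative reduction: 50%"
```

In this made-up example, 2% of treated patients and 4% of placebo patients are hospitalized, so the treated group's risk is half the placebo group's, a 50% relative reduction.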

“This has been a frustrating journey in the sense that I know this drug could have saved millions of lives if we had it ready at the beginning of the pandemic,” says Glenn. “But it can still save many lives. The phase 3 study is done, and hopefully that’ll be the basis of an emergency use authorization before the end of this year.”

Once approved, peginterferon lambda could be used on its own or in combination with Pfizer’s Paxlovid, an antiviral with a different underlying mechanism. Giving both antivirals together could help prevent drug resistance to Paxlovid from developing, says Glenn.

Glenn is also looking beyond COVID-19 treatment uses for the drug, believing it should work against influenza and other viruses too. In the future, he envisions a patient with a respiratory virus getting a shot of peginterferon lambda at a clinic, going home, and having the doctors sort out later which virus caused the infection.

Targeting RNA Structures in the Virus

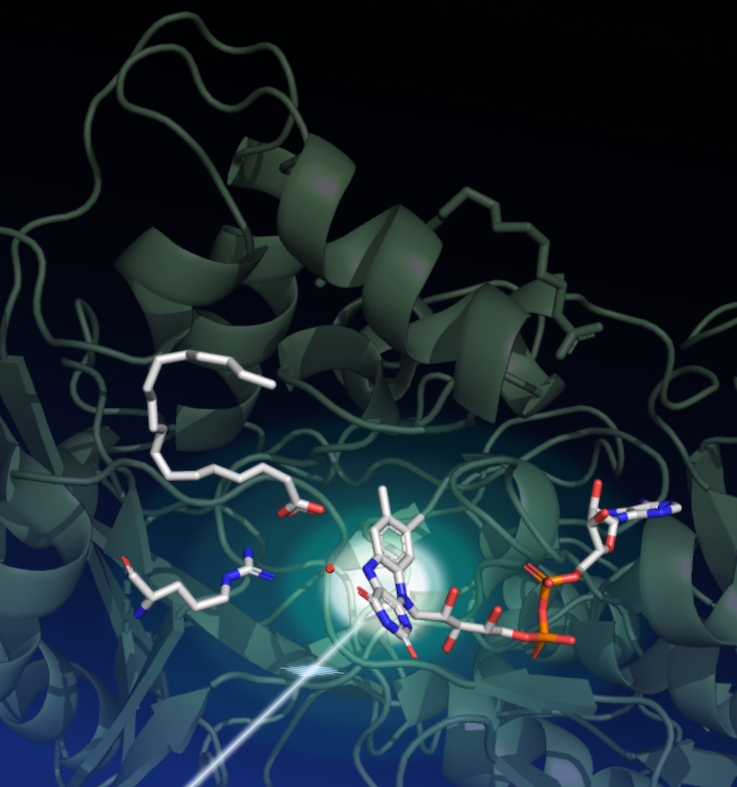

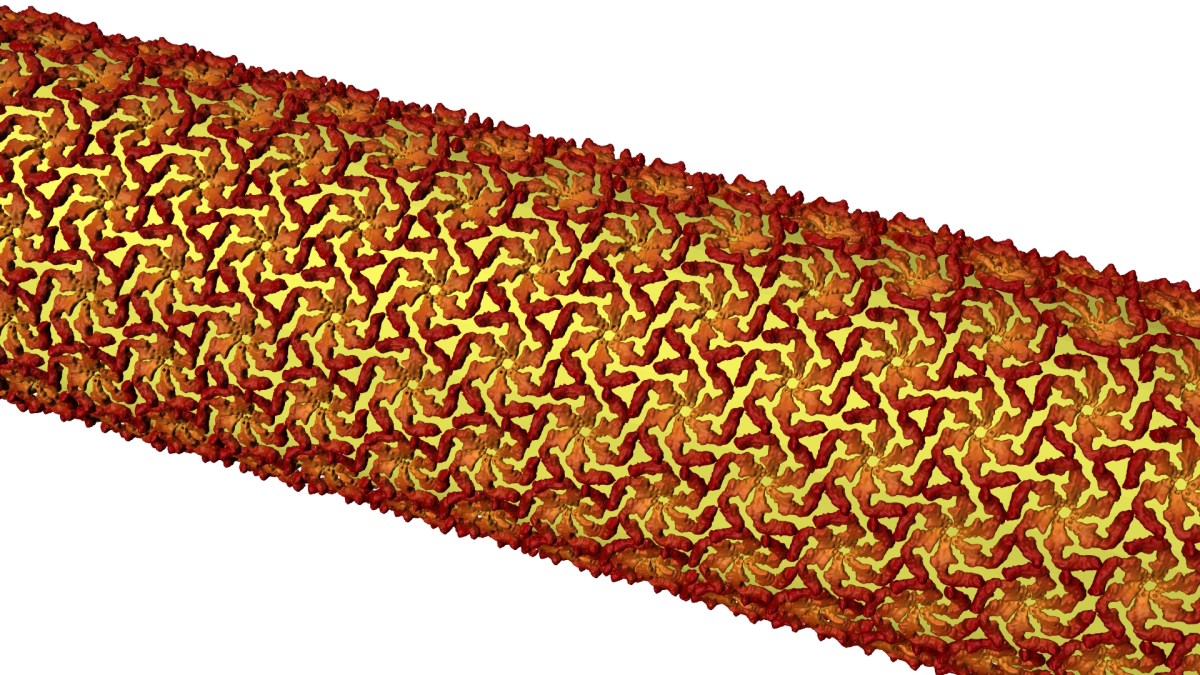

In addition to developing host-targeting drugs, Glenn’s team is developing programmable antivirals that target a virus’s genome structure. After identifying essential RNA secondary structures for a virus, they design or “program” a drug to act against these structures. The aim is to use the virus’s own biology against itself, limiting its ability to mutate to escape the effect of the drug.

Glenn and his collaborators have developed such antivirals for influenza A and COVID-19 and have shown drug efficacy in animal models, but not in people yet.

In the influenza study, a single intranasal administration of the antiviral allowed mice to survive a lethal dose of influenza A virus, whether the drug was given 14 days before or even three days after viral inoculation. Additionally, the antiviral provided immunity against a tenfold lethal dose of influenza A given two months later.

“We call this a single-dose preventive, therapeutic, and just-in-time universal vaccination that works against all influenza A virus strains, including drug-resistant ones,” says Glenn. “The primary goal is to prevent a severe influenza pandemic, but the same drug could be used for regular seasonal flu.”

Preparing for Future Pandemics

Glenn hopes the current pandemic is a wake-up call to better prepare against future pandemics.

“COVID-19 is tragic, but it isn’t what keeps me up at night,” admits Glenn. “We are extremely vulnerable to a highly pathogenic, drug-resistant influenza virus. That fear is really what motivates us.”

Fear of an influenza pandemic and other serious pandemics also inspired Glenn to start ViRx@Stanford, a Stanford Biosecurity and Pandemic Preparedness Initiative. Its goal is to proactively build up our collective antiviral tool kit to protect against future pandemics.

ViRx@Stanford’s subsection SyneRx was recently selected as one of nine Antiviral Drug Discovery Centers by the National Institutes of Health. Stanford’s center will involve more than 60 faculty and consultants working on seven research projects and three scientific cores. And ViRx@Stanford is now expanding, establishing hubs in Vietnam, Israel, Brazil, Singapore, and beyond.

“Innovative drug development is expensive. This is the kind of support that can actually help us do what we’ve never been able to do here before,” says Glenn. “The goal of all of this is to develop real-world drugs that can make a big difference for patients across the world. And I think we’re on track to do that.”

This is a reposting of my feature article in the recent Stanford Medicine annual report, courtesy of Stanford Medicine.